Apr 07, 2026

Why Generic AI Tools Fall Short in Certification Workflows

Artificial intelligence (AI) is becoming part of everyday work, and certification bodies (CBs) are starting to explore how it can support audits and certification processes. Many of these tools are general-purpose AI applications designed to generate text or answer questions. While these can be useful in some contexts, they aren’t built for the structure, data, and requirements that define certification work.

What generic AI tools are designed to do

Generic AI tools are designed to process prompts and generate responses based on broad, general knowledge. Their strength lies in producing text quickly, summarizing information, or assisting with general-purpose tasks. They aren’t embedded in certification-related environments and therefore lack the context needed to support how audits are planned, conducted, and reviewed.

When auditors prepare for an audit or assess findings, they rely on specific information such as audit history, scope, and documented findings. Generic AI tools can work with information that is provided manually, but that alone doesn’t make their output directly usable in certification work, so their ability to support these activities in a meaningful way remains limited.

Where generic AI falls short in certification workflows

This limitation becomes more apparent when generic AI is used in practice. Outputs may appear well-formed, but they aren’t connected to the underlying audit data. In certification workflows, where referenceability, traceability, and consistency are essential, this creates clear limitations:

- No connection to certification systems: Generic AI operates outside the platforms where audits and certification activities take place.

- Limited certification context: Providing audit data manually doesn’t recreate the broader context in which audit and certification decisions are made.

- Lack of traceability: Results can’t be easily linked back to underlying data or decisions.

- Misalignment with workflows: Outputs are created outside the steps, roles, and review points that shape audit and certification work, which can require additional interpretation before they can be used.

- Unclear data handling context: When AI is used outside certification systems, it may be difficult to verify how audit-related data is processed, stored, and separated.

As a result, AI-generated content is hard to verify and impractical to use. Instead of fitting into the workflow, it introduces an additional layer that auditors need to validate separately.

Why this matters for CBs

For CBs, this affects how easily AI can be integrated into day-to-day work. If outputs can’t be linked to audit data or aligned with existing workflows, they introduce additional effort rather than reducing it. Auditors may need to cross-check results, re-enter information, or manually connect outputs back to the certification context.

Over time, this limits the value of generic AI tools. While they may support isolated tasks, they don’t contribute directly to the structured processes that CBs rely on. As a result, these tools remain peripheral rather than embedded in how certification work is actually performed.

How Intact AI supports certification work in practice

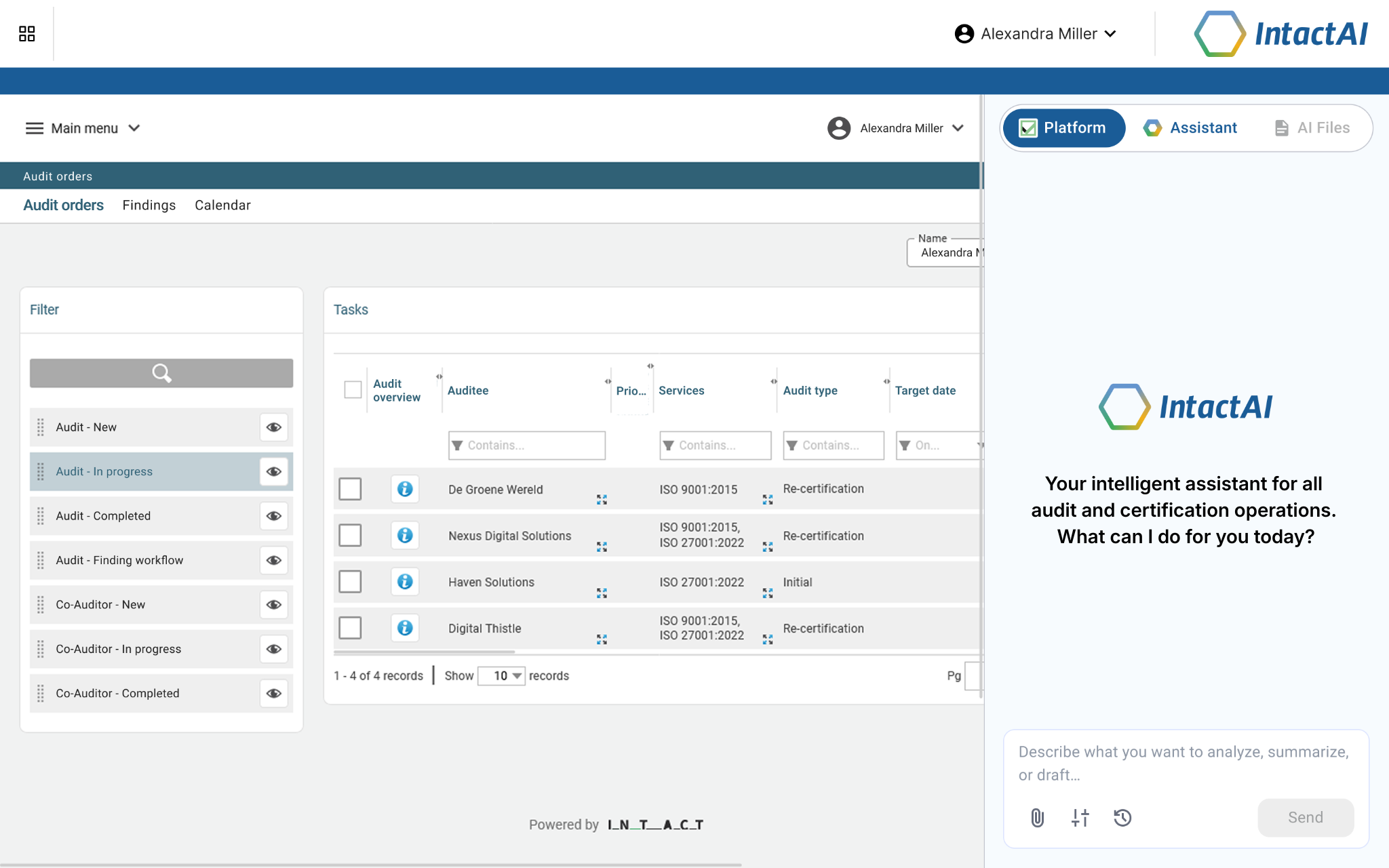

For AI to support certification work effectively, it needs to be part of the systems where audits are managed and decisions are made. Intact AI was designed with this in mind. It’s integrated into the Intact Platform, works directly with the data stored in the system, and includes AI agents that support specific tasks. Intact AI has a clear role in certification work:

- Integration into the certification system: Intact AI operates within the Platform where audits and certification activities are managed.

- Use of audit data as context: It works with findings history, audit metadata, and client information stored in the Platform.

- Support for certification tasks: It’s designed to support audit and certification tasks rather than only generate generic text.

- Isolated customer environments: Each customer instance is isolated, with no cross-customer data sharing.

- Secure data: Customer data isn’t used to train models for other organizations.

This reduces the need for manual handoffs or additional validation. Auditors can work with outputs that are directly tied to their audit data, making them easier to review and apply in certification processes.

Choosing the right approach to AI in certification

As CBs evaluate how to apply AI, the key question isn’t whether to use it, but how it fits into their work. Tools that operate outside existing systems may offer support at a surface level, but they don’t integrate into the processes that drive audit and certification decisions.

AI becomes more useful when it aligns with how CBs already work: within their systems, with their data, and in support of their defined processes. This shift moves AI from a standalone tool to a practical part of certification workflows.

Book a demo.

We promise to amaze you. Our experts are ready to discuss your challenges and demonstrate how you can master them with ease with Intact software solutions.

Fill out the form, and we will take care of the rest.
